[This is just one of many articles in the author’s Astronomy Digest.]

The aim of autoguiding is to correct for tracking errors in the mount and so allow longer exposures to be taken. When astroimagers were using CCD cameras, their relatively high read noise meant that longer exposures gave a better result than the same total exposure made up of a greater number of shorter exposures. There was a second problem. Using a first generation USB connection, the upload times were quite long (8 seconds for my CCD camera), so unless quite long exposures were used, a significant fraction of the session was spent uploading rather than imaging. So autoguiding was a very useful tool.

Things have changed. The latest CMOS cameras have a far lower read noise and, using a USB3 interface, upload images far faster, so using short exposures, say of 30 to 60 seconds, works pretty well and, depending on the sky glow (light pollution) levels, may even be better. [The ‘Brain’ in SharpCap can analyse an image of the sky background and suggest a suitable length of exposure. Looking north towards Manchester, it suggested an exposure of 10 seconds!]

So it may well be that, when using CMOS cameras, autoguiding is not necessary, as most accurately aligned mounts can track sufficiently well to allow exposures of up to 60 seconds without introducing star trailing when used with telescopes of less than 800 mm focal length. In fact, in this case there can actually be some advantages in not using autoguiding. Unless the tracking and alignment of the mount are perfect (which they will not be unless one is using a very high performance mount, perhaps one using absolute encoders) then during, say, one hour’s total exposure the image will slowly drift across the camera sensor. This is a good thing.

If using an RGB sensor, the drift will help remove the effects of what Tony Hallas calls ‘Colour Mottling’: slight variations in pixel sensitivity, over regions of the sensor of order 20 pixels across, which stop the final image background from being a uniform grey. If hot pixels are present, they will produce a trail across the aligned image, but these will not integrate up in brightness as the faint stars will and so may well be lost in the background noise. [This is not to say that one should not take and use ‘Dark Frames’ as these will remove both hot pixels and ‘amp glow’.] Because the image is being sampled with different combinations of the RGB colour matrix, the image quality can actually be improved when a one shot colour camera is being used and, as the movement across the sensor gives the effect of ‘dithering’, it can even be possible to improve the resolution of the image somewhat by using a ‘x2 drizzle’ mode when aligning and stacking the short exposure subframes. [This technique was used to enhance the HST images when the first wide field camera was undersampling the image.]

Finally, when short exposure subframes are used there is less chance of an individual exposure being ‘photobombed’ by a plane or satellite.
[In Deep Sky Stacker, using the Kappa-Sigma stacking mode, satellite trails should be removed: if, in one subframe, a pixel differs significantly in brightness from the average value for that pixel across the stack, its brightness is replaced with the average value. This does require that a reasonable number of frames have been captured, so shorter exposures can help.]
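
The rejection idea described above can be sketched for a single pixel position. This is a minimal illustration of generic kappa-sigma clipping, not Deep Sky Stacker's actual code: the function name `kappa_sigma_pixel`, the `kappa` and `iterations` values and the sample brightness values are all assumptions chosen for the example.

```python
from statistics import mean, pstdev


def kappa_sigma_pixel(values, kappa=2.0, iterations=5):
    """Combine one pixel's brightness across the subframes (hypothetical helper).

    Values further than kappa standard deviations from the mean are
    rejected, the statistics are recomputed, and the process repeats.
    The mean of the surviving values is returned.
    """
    kept = list(values)
    for _ in range(iterations):
        if len(kept) < 3:
            break  # too few values left to estimate a spread
        m = mean(kept)
        s = pstdev(kept)
        if s == 0:
            break  # all remaining values are identical
        survivors = [v for v in kept if abs(v - m) <= kappa * s]
        if len(survivors) == len(kept):
            break  # nothing rejected on this pass, so stop
        kept = survivors
    return mean(kept)


# Made-up values: nine ordinary subframes plus one crossed by a satellite trail.
pixel_values = [100, 102, 98, 101, 99, 100, 103, 97, 100, 900]
plain_average = mean(pixel_values)
clipped_average = kappa_sigma_pixel(pixel_values)
print(plain_average, clipped_average)
```

With these made-up values the trail pulls the plain average well above the sky level, while the clipped result stays at the sky level. A single bright outlier also inflates the standard deviation, so with only a handful of frames it may survive the test, which is one way to see why a reasonable number of subframes is needed.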

Obviously the drift across the sensor must not be so much that star trailing becomes apparent. This does mean that polar alignment must be pretty accurate. The iOptron iPolar and QHY PoleMaster cameras allow a very good alignment to be reached quickly, as does SharpCap using a guide scope and camera (or the iPolar and PoleMaster cameras). In fact, I find the SharpCap plate solving polar alignment software easier to use than the QHY software. Looking at a single captured frame one can easily see if star trailing is present, but a way to measure the drift is to add the first and last images of a sequence, each with 50% opacity. It will be obvious how a star has shifted across the sensor and, by cropping the image to just contain the two images of the star, the movement in pixels can be judged. If this movement is divided by the total time of the imaging sequence in seconds, one finds the pixel movement per second. Multiplying this by the exposure time gives the movement per exposure in pixels, and multiplying that by the image scale (in arc seconds per pixel, given by 206.265 × pixel size in microns ÷ focal length in mm) converts it to arc seconds. If this is less than ~2 arc seconds then there should be no problem.
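
The drift arithmetic above can be written out as a short calculation. This is only a sketch: the function names are hypothetical, and the pixel size, focal length, measured shift and timings are illustrative numbers rather than values from any of the imaging sessions described here. The image scale uses the standard small-angle formula.

```python
def pixel_scale_arcsec(pixel_size_um, focal_length_mm):
    """Image scale in arc seconds per pixel (small-angle approximation)."""
    return 206.265 * pixel_size_um / focal_length_mm


def drift_per_exposure_arcsec(shift_px, sequence_s, exposure_s, scale_arcsec_per_px):
    """Drift during one exposure, in arc seconds.

    shift_px   : star movement between the first and last frames, in pixels
    sequence_s : total duration of the imaging sequence, in seconds
    exposure_s : length of a single subframe, in seconds
    """
    drift_rate_px_per_s = shift_px / sequence_s
    return drift_rate_px_per_s * exposure_s * scale_arcsec_per_px


# Illustrative values only (assumed, not measured).
scale = pixel_scale_arcsec(pixel_size_um=3.76, focal_length_mm=500.0)
drift = drift_per_exposure_arcsec(
    shift_px=120.0,      # shift seen in the 50% opacity overlay
    sequence_s=3600.0,   # one hour sequence
    exposure_s=30.0,     # 30 second subframes
    scale_arcsec_per_px=scale,
)
print(f"scale = {scale:.2f} arcsec/pixel, drift = {drift:.2f} arcsec per exposure")
print("OK" if drift < 2.0 else "shorten the exposure or improve the alignment")
```

With these assumed numbers the star moves one pixel per exposure, which at about 1.55 arc seconds per pixel stays under the ~2 arc second limit.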

There is, however, one drawback of not autoguiding. The area of the image covered by all of the subframes will be reduced, so the final image will need to be cropped somewhat, by how much depending on how well the mount is tracking. [If the tracking is not that good, it may be necessary to realign the telescope at times during a long imaging session.]
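
As a rough guide to how much cropping to expect, the fraction of the frame covered by every subframe can be estimated from the total drift. The sketch below assumes the drift is a pure shift with no field rotation; the function name, sensor dimensions and drift values are hypothetical.

```python
def common_area_fraction(width_px, height_px, drift_x_px, drift_y_px):
    """Fraction of the sensor area covered by both the first and last subframes.

    Assumes a pure translation between frames (no field rotation).
    """
    usable_width = max(0, width_px - abs(drift_x_px))
    usable_height = max(0, height_px - abs(drift_y_px))
    return (usable_width * usable_height) / (width_px * height_px)


# Illustrative values: a 4000 x 3000 pixel sensor and a total drift of
# 200 pixels horizontally and 150 pixels vertically over the session.
fraction = common_area_fraction(4000, 3000, 200, 150)
print(f"{fraction:.1%} of the frame survives the crop")
```

Realigning the telescope part way through a session resets the drift, so the crop is then set by the largest offset from the starting position rather than by the drift over the whole session.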

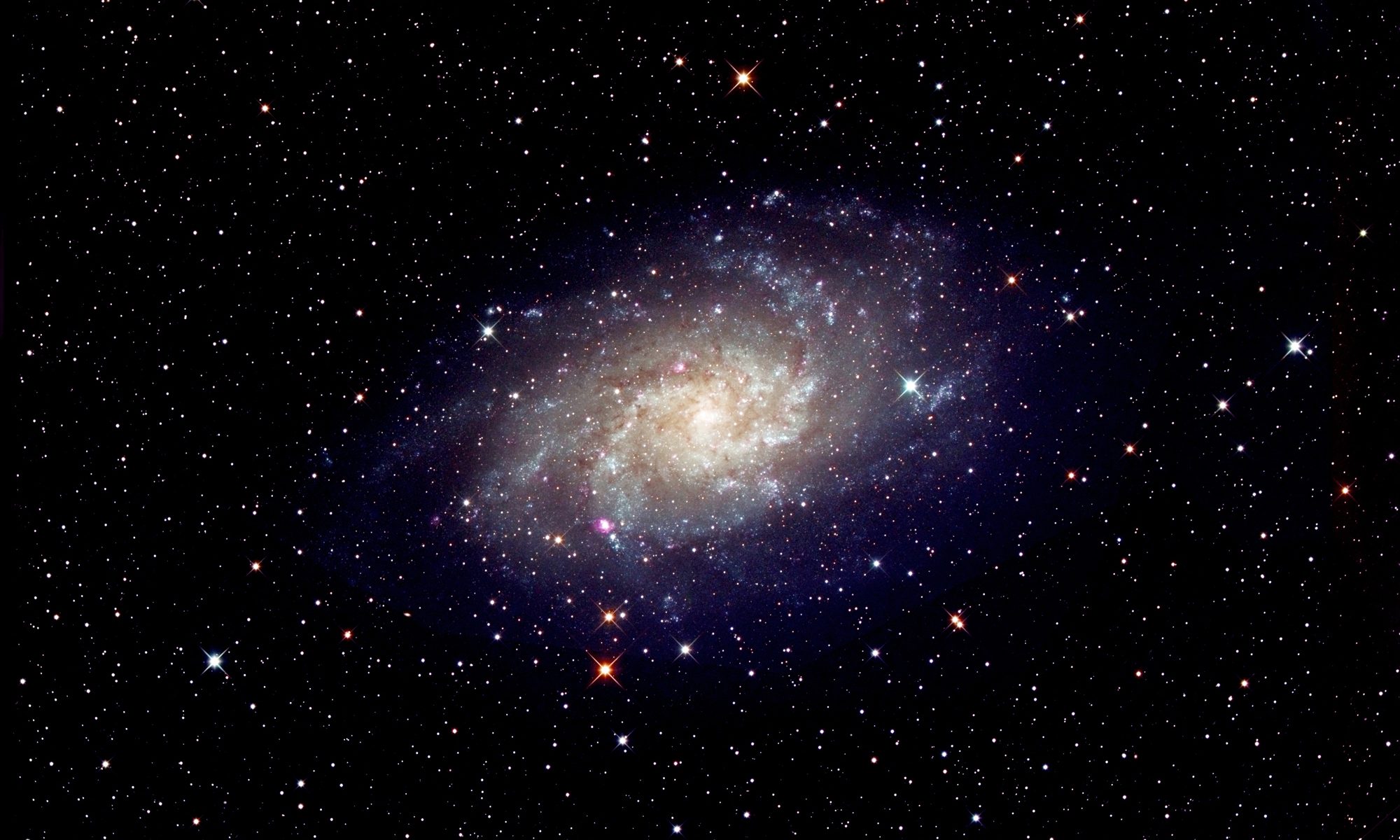

As an example, when imaging M31 with a 90 mm telescope, I realigned the telescope on M31 after each 100 frames of the total 317 frames. In fact my alignment was not that accurate and the shift across the frame was quite significant, but the movement during one 25 second exposure was still just 2 arc seconds.

To avoid the problems of colour mottling when autoguiding is employed, dithering is usually used if the mount is being controlled by a computer running, perhaps, PHD2 guiding.