[This is just one of many articles in the author’s Astronomy Digest.]

The conventional wisdom is that aligning and stacking raw files, which may have a 12- or even 14-bit depth, must be better than using Jpegs, which have only an 8-bit depth and may well show artefacts. Over the years I have been surprised how well images have turned out when only Jpegs were taken, so I decided to carry out a test, processing both sets (raw and Jpeg) of fifty 25-second frames taken with a superb 45mm Zeiss Contax G lens (set to f/4) mounted via an adapter on my Sony A5000 mirrorless camera. (See the article in the Digest about this camera.) An ISO of 350 was used. The frames were taken from an urban location (and, sadly, over the town centre) but with a reasonably transparent sky and an overhead limiting magnitude of 5.5, so they included a significant amount of light pollution. As seen below, I found that the result from the Jpeg frames appeared more attractive than that derived from the raw frames. In particular, the Jpeg result showed the nebulosity surrounding the Pleiades Cluster far better. Why could this be?

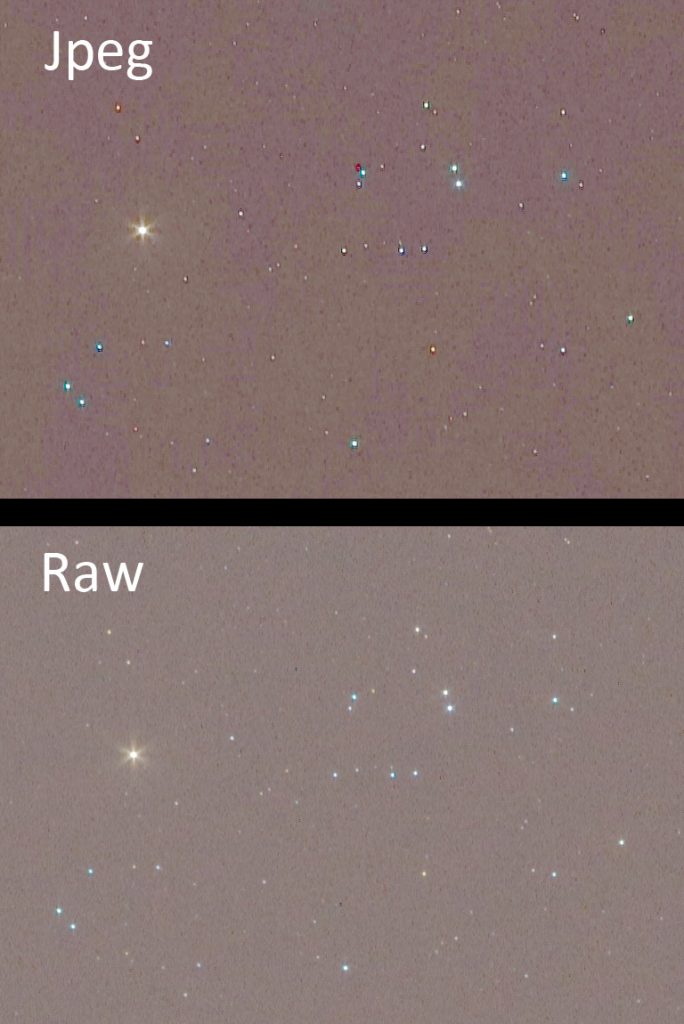

There is absolutely no doubt that a single Jpeg bears no comparison to a single raw image, as the crops of the region containing Aldebaran and part of the Hyades Cluster show.

But what if a large number of Jpegs (in this case 50) are aligned and averaged? It turns out that the advantage of raw over Jpeg is greatly reduced.

How Jpegs can rival raw files.

The key point in the description above is that 50 frames were stacked. As I will try to explain, provided that noise is present in the image, stacking greatly increases the effective bit depth of the result. So this advantage of raw files may not be as significant as it first appears.
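A rough way to put numbers on this is the standard signal-averaging result. It is an idealisation: it assumes the noise is random, uncorrelated between frames and at least comparable to one 8-bit step, and it ignores Jpeg compression artefacts. Averaging N frames reduces the noise by a factor of √N, which is roughly equivalent to gaining half a bit of resolution for each doubling of N:

$$
\sigma_{\text{stack}} = \frac{\sigma}{\sqrt{N}}, \qquad
\Delta b \approx \log_2 \sqrt{N} = \tfrac{1}{2}\log_2 N
$$

For the 50 frames used here, Δb ≈ ½ × log₂ 50 ≈ ½ × 5.64 ≈ 2.8 bits, so in this idealised picture the stacked 8-bit Jpegs behave more like roughly 11-bit data.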

The signal from each pixel is converted into a digital number by an analogue-to-digital (A-D) converter. Consider this scenario. Suppose that the true output of a pixel corresponds to a value of 221.3 on an 8-bit scale. A 12-bit converter records levels 16 times more finely than an 8-bit one, so the raw file can hold a value very close to this. When the data are reduced to 8 bits in the Jpeg conversion, however, only integer results such as 221 or 222 are possible. (The 8-bit range is 0-255.) If there were no noise affecting the output of the pixel, the result would always be the nearest value, 221. Obviously not as good. However, now let's add some noise into the signal that is output from the pixel (there will be some!). The successive 8-bit values will now vary, certainly giving values of 221 and 222 and quite likely some (but fewer) values of 220 and 223. Because the real value of the pixel output is 221.3, there will be more readings of 221 and 220 and fewer of 222 and 223. If a reasonable number of these 8-bit values are averaged, as happens when a set of Jpeg frames is aligned and stacked using 16- or even 32-bit accumulators, the average will lie closer to 221 than to 222 and is likely to be very close to 221.3. So averaging a number of 8-bit values when noise is present increases the effective bit depth of the result, so eliminating much of that advantage of raw files. I promise that this is true.
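The scenario above can be checked with a short simulation. This is a minimal sketch in Python: it assumes Gaussian noise, takes the 221.3 level on the 8-bit scale as given, and the function names are purely illustrative.

```python
import math
import random

TRUE_VALUE = 221.3  # true pixel level on the 8-bit (0-255) scale, as in the example


def to_8bit(x: float) -> int:
    """Round to the nearest integer and clip to 0-255, mimicking 8-bit storage."""
    return min(255, max(0, math.floor(x + 0.5)))


def stacked_mean(n_frames: int, noise_sigma: float, seed: int = 1) -> float:
    """Average n_frames 8-bit readings of TRUE_VALUE with Gaussian noise added.

    The running sum is a Python float, standing in for the 16- or 32-bit
    accumulator that a stacking program would use.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_frames):
        total += to_8bit(TRUE_VALUE + rng.gauss(0.0, noise_sigma))
    return total / n_frames


if __name__ == "__main__":
    # No noise: every frame rounds to 221, so stacking cannot recover the 0.3.
    print(stacked_mean(50, noise_sigma=0.0))  # 221.0
    # Noise of about one 8-bit step: the average moves towards 221.3.
    print(round(stacked_mean(50, noise_sigma=1.0), 2))
    print(round(stacked_mean(100_000, noise_sigma=1.0), 2))
```

With the noise switched off, every frame rounds to 221 however many frames are stacked. With noise of about one 8-bit step, the average of 100,000 frames lands within a few hundredths of 221.3, and even 50 frames will usually land within about ±0.3 of it.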

I also suspect that, when converting the sensor data into a Jpeg, the camera applies some 'stretching' to lift up the fainter parts of the image. This seems to be supported by the fact that, when processing the stacked raw and Jpeg images, I have had to stretch the raw image further to give a similar result. The camera may also apply some tone mapping and other effects when it produces the Jpeg, and these could help to bring out some parts of the image. This sometimes appears to be the case when I have compared single raw and Jpeg frames (not just in astrophotography), and it will depend on the camera being used. Averaging a number of frames could also reduce, or even remove, the Jpeg artefacts that are present in the single frame above. So it is not impossible that stacking a reasonable number of Jpeg frames (say 30 or more) could actually give a better result, as I believe it has here.
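The camera's exact Jpeg processing is not known, so the sketch below simply assumes a plain power-law tone curve with exponent 1/2.2 (a common approximation to the sRGB curve) to show how such a curve lifts faint levels far more than bright ones. The function names are illustrative.

```python
GAMMA = 2.2  # assumed; real in-camera tone curves are proprietary and more complex


def linear_to_8bit(linear: float, gamma: float = GAMMA) -> int:
    """Map a linear sensor level (0.0-1.0) to 8 bits through a power-law curve."""
    linear = min(1.0, max(0.0, linear))
    return round(255 * linear ** (1.0 / gamma))


def linear_only_8bit(linear: float) -> int:
    """The same level stored linearly in 8 bits, with no tone curve."""
    linear = min(1.0, max(0.0, linear))
    return round(255 * linear)


if __name__ == "__main__":
    for level in (0.005, 0.02, 0.1, 0.5, 1.0):
        print(f"{level:>6}: linear -> {linear_only_8bit(level):3d}, "
              f"tone-curved -> {linear_to_8bit(level):3d}")
```

A faint level of 0.5% of full scale is stored as 1 without the curve but as about 23 with it, which would be consistent with the raw stack needing extra stretching to match. A side effect is that such a curve spends more of the 256 available levels on the faint end of the range, where the nebulosity lies.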

Processing the raw and Jpeg images

Sequator (see the article in the Digest) was used, as it is very fast. The frames were loaded into Sequator and aligned and stacked without using any of its optional adjustments. The 16-bit Tiff result was then loaded and processed in Adobe Photoshop, though the free program Glimpse could also be used.
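How Sequator combines frames internally is not described here, so the following is only a minimal sketch of plain mean stacking in NumPy, assuming the frames have already been aligned; the function name is hypothetical. It shows why the accumulator needs more bits than the 8-bit inputs: the sum of fifty 8-bit frames can reach 12,750, and the fractional means are exactly what the extra bit depth preserves.

```python
import numpy as np


def mean_stack_8bit(frames: list[np.ndarray]) -> np.ndarray:
    """Average already-aligned 8-bit frames and return a 16-bit image.

    The sum is accumulated in float64 (summing in uint8 would overflow and
    would discard the fractional part of the mean). The 0-255 mean is then
    rescaled to 0-65535 by a factor of 257, as a 16-bit Tiff would hold it.
    """
    acc = np.zeros(frames[0].shape, dtype=np.float64)
    for frame in frames:
        acc += frame.astype(np.float64)
    mean = acc / len(frames)
    return np.clip(np.rint(mean * 257.0), 0, 65535).astype(np.uint16)


if __name__ == "__main__":
    # Three one-pixel "frames" reading 221, 221 and 222.
    frames = [np.array([[v]], dtype=np.uint8) for v in (221, 221, 222)]
    print(mean_stack_8bit(frames))  # between 221 * 257 and 222 * 257
```

A single 8-bit value of 221 would become 56,797 in 16 bits; the stack of 221, 221 and 222 gives 56,883, a level that no single 8-bit frame could represent.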

The stacked image below showed, as expected, significant light pollution.

Removing the light pollution

The image was duplicated and the 'Dust and Scratches' filter applied to the copy with a radius of 30 pixels. The filter 'thinks' the stars are dust and removes them, but the Pleiades Cluster and Aldebaran are still visible.

These were cloned out using an adjacent part of the image (in the hope that the light pollution there would be similar), and the Gaussian Blur filter was then applied with a radius of 40 pixels to give a very smooth image of the light pollution, which varies across the frame.
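The background model built in these two steps can be sketched in Python with NumPy. Two assumptions are made here: Photoshop's 'Dust and Scratches' filter is treated as a plain median filter (the real filter also has a threshold setting), and the Gaussian Blur radius is treated as the standard deviation of the Gaussian. The manual cloning step is left out, and all function names are illustrative. The median filter as written is memory-hungry, so for full-size frames a library routine such as `scipy.ndimage.median_filter` would be the practical choice.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def median_filter(img: np.ndarray, radius: int) -> np.ndarray:
    """Stand-in for 'Dust and Scratches': median over a (2r+1) x (2r+1) window.

    Stars cover only a few pixels of each window, so they are rejected,
    while the broad sky-glow gradient survives. Edges are reflected.
    """
    size = 2 * radius + 1
    padded = np.pad(img.astype(np.float64), radius, mode="reflect")
    windows = sliding_window_view(padded, (size, size))  # shape (H, W, size, size)
    return np.median(windows, axis=(-2, -1))


def gaussian_kernel(sigma: float) -> np.ndarray:
    """Normalised 1-D Gaussian kernel, truncated at 3 sigma."""
    half = int(np.ceil(3 * sigma))
    x = np.arange(-half, half + 1, dtype=np.float64)
    k = np.exp(-0.5 * (x / sigma) ** 2)
    return k / k.sum()


def gaussian_blur(img: np.ndarray, sigma: float) -> np.ndarray:
    """Separable Gaussian blur (rows, then columns) with reflected edges."""
    k = gaussian_kernel(sigma)
    half = len(k) // 2
    padded = np.pad(img.astype(np.float64), half, mode="reflect")
    rows = np.apply_along_axis(np.convolve, 1, padded, k, mode="valid")
    return np.apply_along_axis(np.convolve, 0, rows, k, mode="valid")


def background_model(img: np.ndarray, median_radius: int,
                     blur_sigma: float) -> np.ndarray:
    """Smooth light-pollution model: reject the stars, then blur."""
    return gaussian_blur(median_filter(img, median_radius), blur_sigma)


if __name__ == "__main__":
    # Small synthetic sky: a left-to-right glow gradient plus one bright 'star'.
    sky = 10.0 + np.tile(np.arange(21, dtype=np.float64), (21, 1))
    sky[10, 10] = 255.0
    model = background_model(sky, median_radius=3, blur_sigma=1.0)
    print(model[10, 10])  # close to the underlying gradient value of 20
```

The small radii in the usage lines only suit the tiny test image; on a real frame the radii quoted above (30 and 40 pixels) would be used.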

The two layers were then flattened using the 'Difference' blending mode at the default opacity of 100%. This removes the light pollution from the original frame.
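The 'Difference' blend at 100% opacity takes the absolute difference of the two layers. A minimal NumPy sketch (the function name is illustrative):

```python
import numpy as np


def difference_blend(image: np.ndarray, background: np.ndarray) -> np.ndarray:
    """'Difference' blend at 100% opacity: |image - background|, per pixel."""
    return np.abs(image.astype(np.float64) - background.astype(np.float64))


if __name__ == "__main__":
    glow = np.linspace(20.0, 60.0, 25).reshape(5, 5)  # smooth sky-glow model
    stars = np.zeros((5, 5))
    stars[2, 2] = 50.0                                # one star
    print(difference_blend(glow + stars, glow))       # only the star remains
```

Because the absolute value is taken, anywhere the smoothed model is brighter than the real sky comes out as a faint positive value rather than black; a plain subtraction clipped at zero would treat those areas differently.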

Stretching the image

Either the Levels or the Curves tool can be used. In Levels, move the central (midtone) slider leftwards to 1.2. This brightens the fainter parts of the image more than the brighter ones. After a few applications to suit your preference, make a further application, moving the left slider to the right to raise the black point and so suppress the background noise.

In Curves, lift up the left-hand part of the curve to have the same effect.
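The Levels adjustment can be modelled with the commonly used formula in which the midtone value acts as a gamma: after the black and white points are applied, each value is raised to the power 1/midtone. Whether Photoshop uses exactly this internally is an assumption, and the black-point value of 30 in the usage lines is hypothetical.

```python
import numpy as np


def levels(img: np.ndarray, black: float = 0.0, white: float = 255.0,
           midtone: float = 1.0) -> np.ndarray:
    """Levels-style adjustment on 0-255 data (assumed gamma formula).

    Values are rescaled so that `black` maps to 0 and `white` to 255, clipped,
    then raised to the power 1/midtone. A midtone above 1 (slider moved left)
    lifts faint levels proportionally more than bright ones.
    """
    x = (img.astype(np.float64) - black) / (white - black)
    x = np.clip(x, 0.0, 1.0)
    return 255.0 * x ** (1.0 / midtone)


if __name__ == "__main__":
    out = np.array([5.0, 20.0, 100.0, 250.0])  # faint to bright sample levels
    for _ in range(3):                          # a few passes with the midtone at 1.2
        out = levels(out, midtone=1.2)
    out = levels(out, black=30.0)               # hypothetical black point to darken the sky
    print(np.round(out, 1))
```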

Hot pixels and their removal

I had deliberately slightly misaligned the axis of the nanotracker with the North Celestial Pole. This was done to eliminate what Tony Hallas calls 'Color Mottling' (he is American, hence the spelling): variations in the sensitivity of different colour pixels on scale sizes of ~20 pixels. These can give the background noise, which should be a uniform very dark grey, a random coloured pattern. Because of the misalignment, the stars, but not the hot pixels, move across the sensor over the course of the imaging session. When the frames are aligned on the stars and stacked, the hot pixels therefore form linear tracks, as seen in the image below. Had I used the in-camera 'Long Exposure Noise Reduction', in which the camera follows each light frame with a dark frame, the hot pixels would have been eliminated. But this adds some noise to each frame and also halves the time spent imaging the sky! So my Jpeg result showed quite a number of red and blue streaks across the image, and I had to spend a few minutes 'spotting' them out with a black brush. What I do not fully understand is that the result of aligning and stacking the raw frames, as described below, did not show them. I suspect this is because the Jpeg conversion stretched the image and so increased their brightness relative to that in the raw frames. As the misalignment spreads out their light, it could be that they fell below the noise level in the raw result.

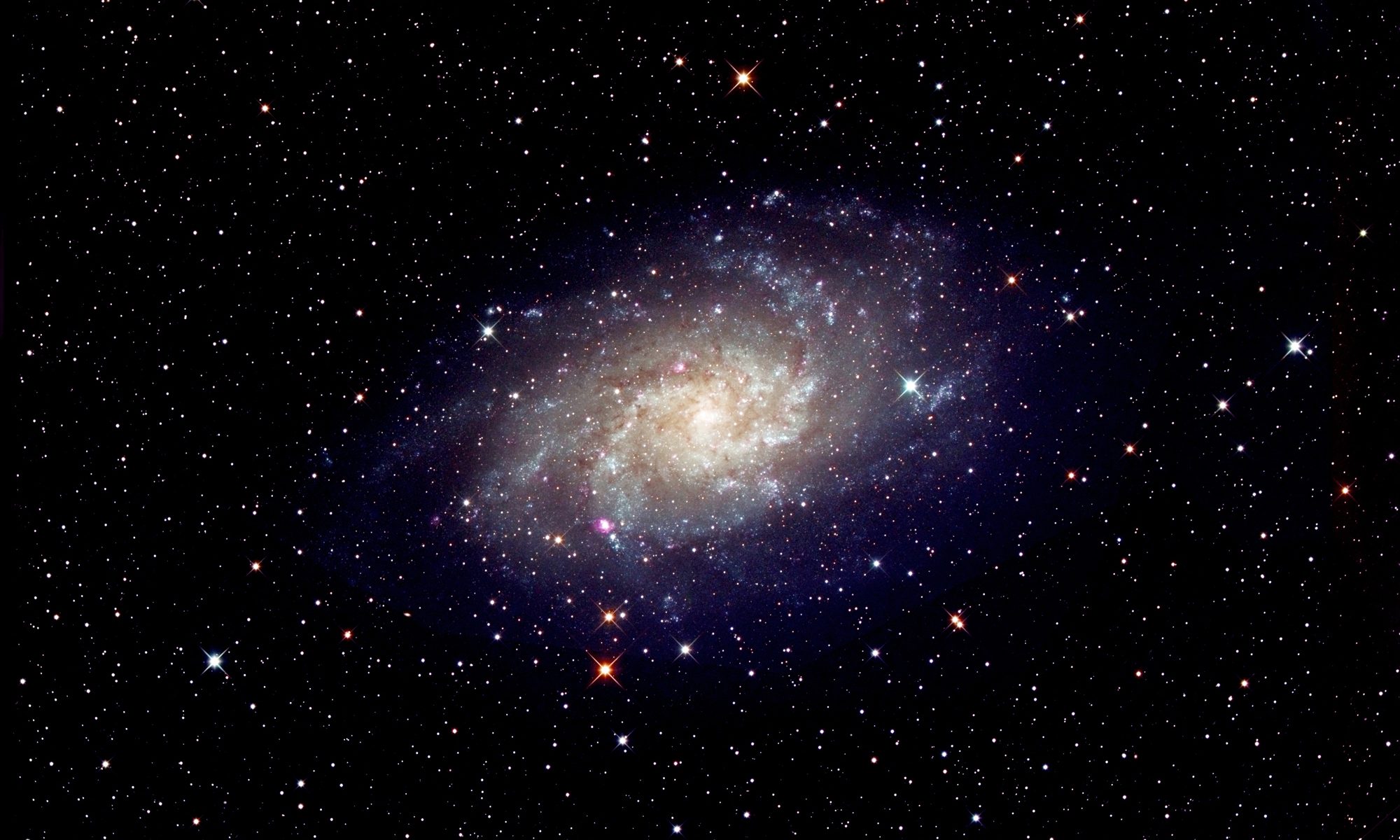
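A one-dimensional toy model makes the streak mechanism concrete. It is a hypothetical sketch: the sky is assumed to drift exactly one pixel per frame, the hot pixel stays fixed on the sensor, and alignment is done by shifting each frame back by the drift. The function name and numbers are illustrative.

```python
import numpy as np


def stack_with_drift(n_frames: int, width: int, hot_col: int,
                     hot_excess: float, drift_per_frame: int = 1) -> np.ndarray:
    """Return a 1-D aligned-and-averaged stack containing one hot pixel.

    The hot pixel sits at sensor column `hot_col` in every frame. Aligning on
    the drifting stars shifts each frame back by i * drift_per_frame, so the
    hot pixel lands in a different place each time. np.roll wraps around, so
    `width` should exceed hot_col and n_frames * drift_per_frame.
    """
    stack = np.zeros(width, dtype=np.float64)
    for i in range(n_frames):
        sensor = np.zeros(width, dtype=np.float64)
        sensor[hot_col] = hot_excess              # hot pixel, fixed on the sensor
        aligned = np.roll(sensor, -i * drift_per_frame)
        stack += aligned
    return stack / n_frames


if __name__ == "__main__":
    streak = stack_with_drift(n_frames=50, width=200, hot_col=120, hot_excess=100.0)
    print(np.count_nonzero(streak), streak.max())
```

With 50 frames, a hot pixel 100 levels above the background becomes a 50-pixel streak only 2 levels bright. This makes it plausible that, without the extra brightening of the Jpeg conversion, such streaks could sink below the noise in the raw stack.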

The final Jpeg result

As shown in the image at the head of the article, the six-bladed iris of the Zeiss lens gives the brighter stars a six-pointed diffraction pattern. That of Aldebaran has been 'softened' using a local application of the Gaussian Blur filter. There is a small open cluster, NGC 1647, over to the left of Aldebaran. I was really pleased with how much of the nebulosity surrounding the Pleiades Cluster had been captured in the Jpeg version.

Processing the raw frames

The Sony camera produces .arw files, which Sequator can accept. They can also be converted into Tiff files in either Adobe Lightroom or Sony's own raw converter.

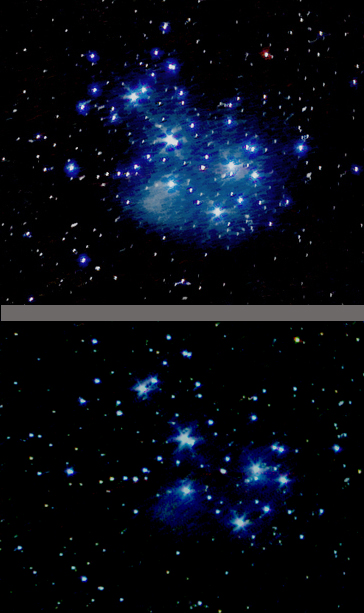

I first aligned and stacked the raw files in Sequator, then removed the light pollution and stretched the result exactly as for the Jpeg version. I was very disappointed with the result. The crops below compare the region of the Pleiades Cluster with that of the Jpeg version: the nebulosity is not nearly as well delineated in the raw image.

In another article in the Digest, I have advocated (as have others) first converting the raw frames into Tiff files and then stacking these. Theoretically, the result should be no different, but it is often better. So I did this, aligning and stacking the Tiffs in Sequator, with the result shown below in comparison with the Jpeg result. Better, but still not as good.

I have also aligned and stacked the frames in Deep Sky Stacker and there was no obvious difference.

Conclusion

The results did not totally surprise me, as I have had pleasing results when I have captured only Jpeg frames, but the difference was far greater than I had expected. I can see, as explained above, why averaging many Jpeg frames can partially overcome their obvious loss of quality. But that should not make the result better than stacking raw frames. I can only surmise that the suspected stretching of the image when the camera produced the Jpeg version has helped. In principle, one could apply a slight stretch to each Tiff file before stacking, and that might improve the result. So I tried converting the raw files in Adobe Lightroom, applying a tone curve to lift up the fainter parts of the image. The overall result was better, but the nebulosity was still not as well captured as when stacking the Jpeg files. It must be possible to get a better result from the raw files, but I do not know how to do it.

The moral is this: build up the sensitivity of the image by taking many (at least 30) short exposures, and store both a Jpeg and a raw file for each frame. Process both and you might find, as in this example, that you prefer the Jpeg result.

A second example

As an imaging exercise, I used a Sony A7 II and a Zeiss (East Germany) 200 mm lens to image the Sword of Orion, capturing both raw and extra fine Jpegs. Fifty-three 17-second exposures were taken at ISO 2,000, the exposure being kept short so as not to overexpose the central part of the Orion Nebula. The raw and Jpeg stacked images were processed identically, save for the fact that I had to apply more stretching to the raw image. The results were virtually identical but, in a very tight crop of just the nebula, the Jpeg version showed the faint nebulosity down to the lower right of the nebula better.